Duplicate Content

What Is Duplicate Content?

Duplicate content is content that is identical or very similar to content on other websites or on other pages of the same website. Having large amounts of duplicate content on a website can negatively impact Google rankings.

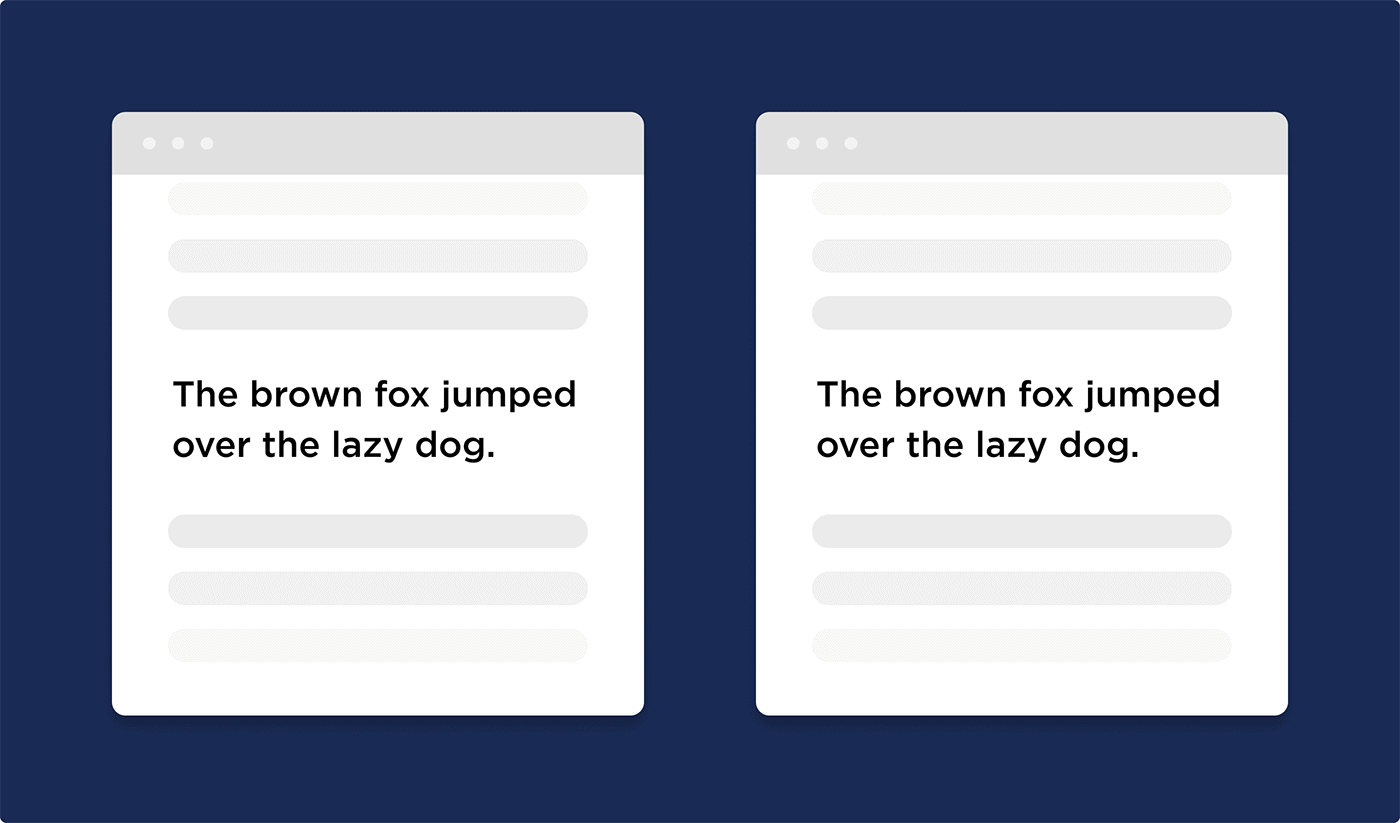

In other words:

Duplicate content is content that’s word-for-word the same as content that appears on another page.

But “Duplicate Content” also applies to content that’s similar to other content… even if it’s slightly rewritten.

How Does Duplicate Content Impact SEO?

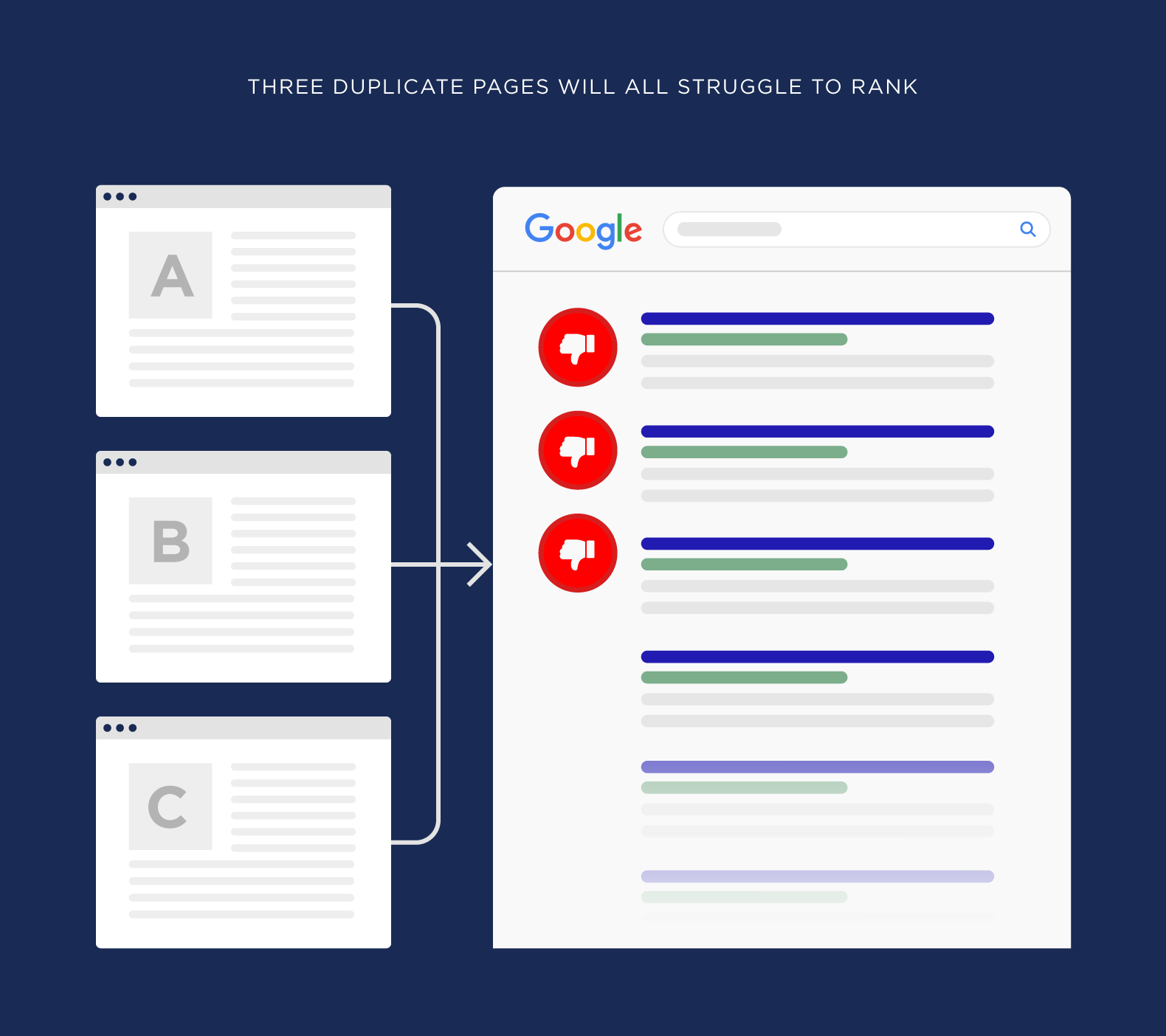

In general, Google doesn’t want to rank pages with duplicate content.

In fact, Google states that:

“Google tries hard to index and show pages with distinct information”.

So if you have pages on your site WITHOUT distinct information, it can hurt your search engine rankings.

Specifically, here are the three main issues that sites with lots of duplicate content run into.

Less Organic Traffic: This is pretty straightforward. Google doesn’t want to rank pages that use content that’s copied from other pages in Google’s index.

(Including pages on your own website)

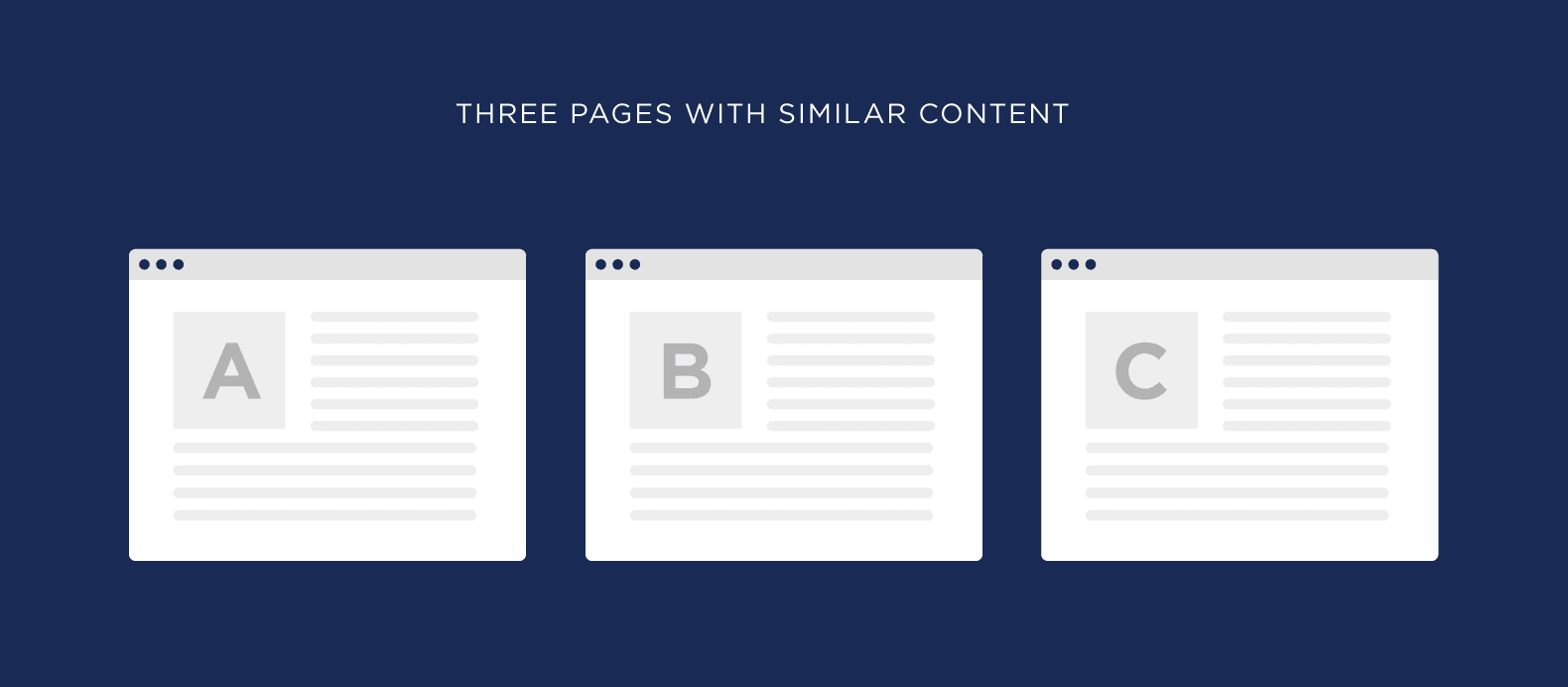

For example, let’s say that you have three pages on your site with similar content.

Google isn’t sure which page is the “original”. So all three pages will struggle to rank.

Penalty (Extremely Rare): Google has said that content duplication can lead to a penalty or complete deindexing of a website.

However, this is super rare. And it’s only done in cases where a site is purposely scraping or copying content from other sites.

So if you have a bunch of duplicate pages on your site, you probably don’t need to worry about a “duplicate content penalty.”

Fewer Indexed Pages: This is especially important for websites with lots of pages (like ecommerce sites).

Sometimes Google doesn’t just downrank duplicate content. It actually refuses to index it.

So if you have pages on your site that aren’t getting indexed, it could be because your crawl budget is wasted on duplicate content.

Best Practices

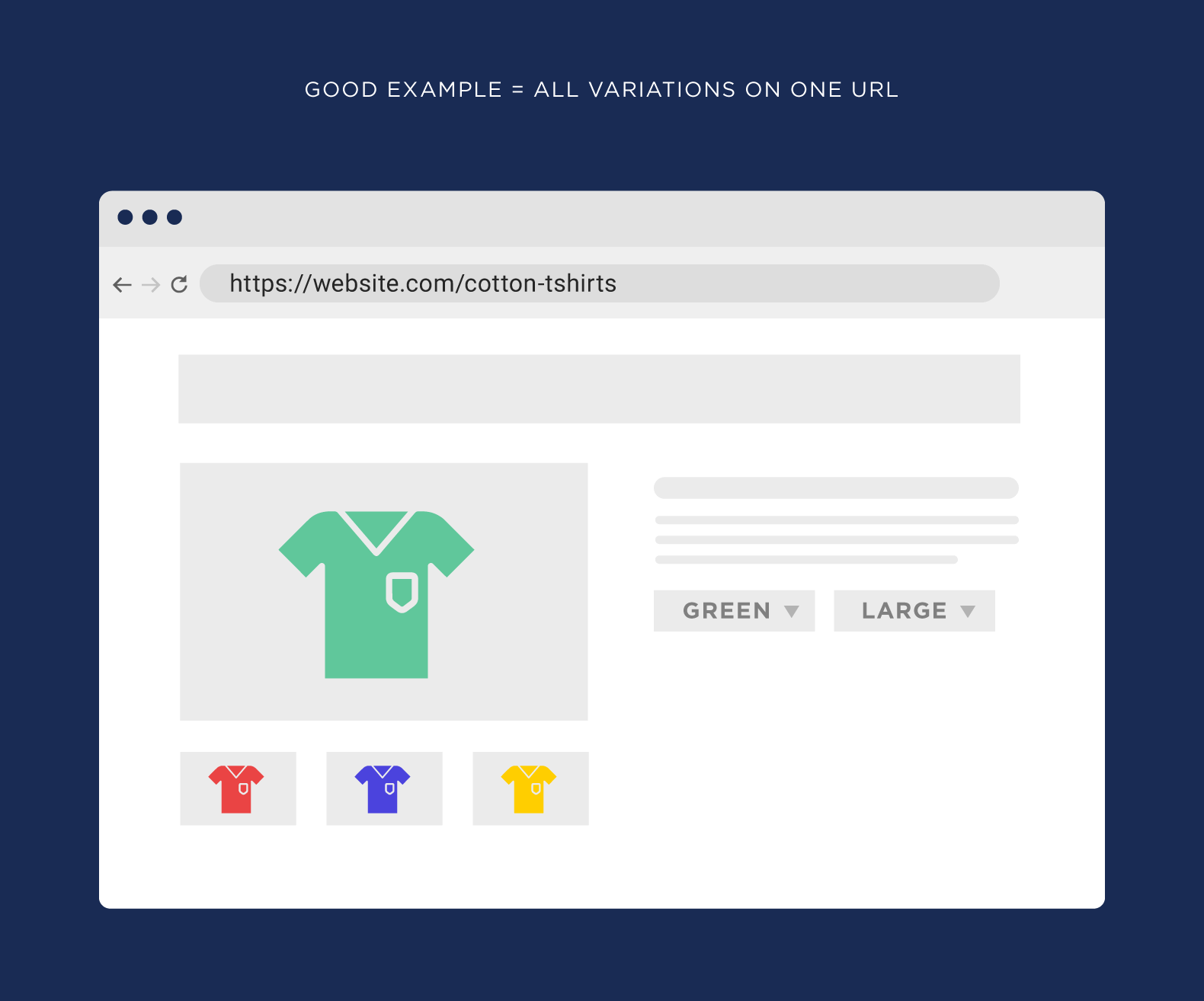

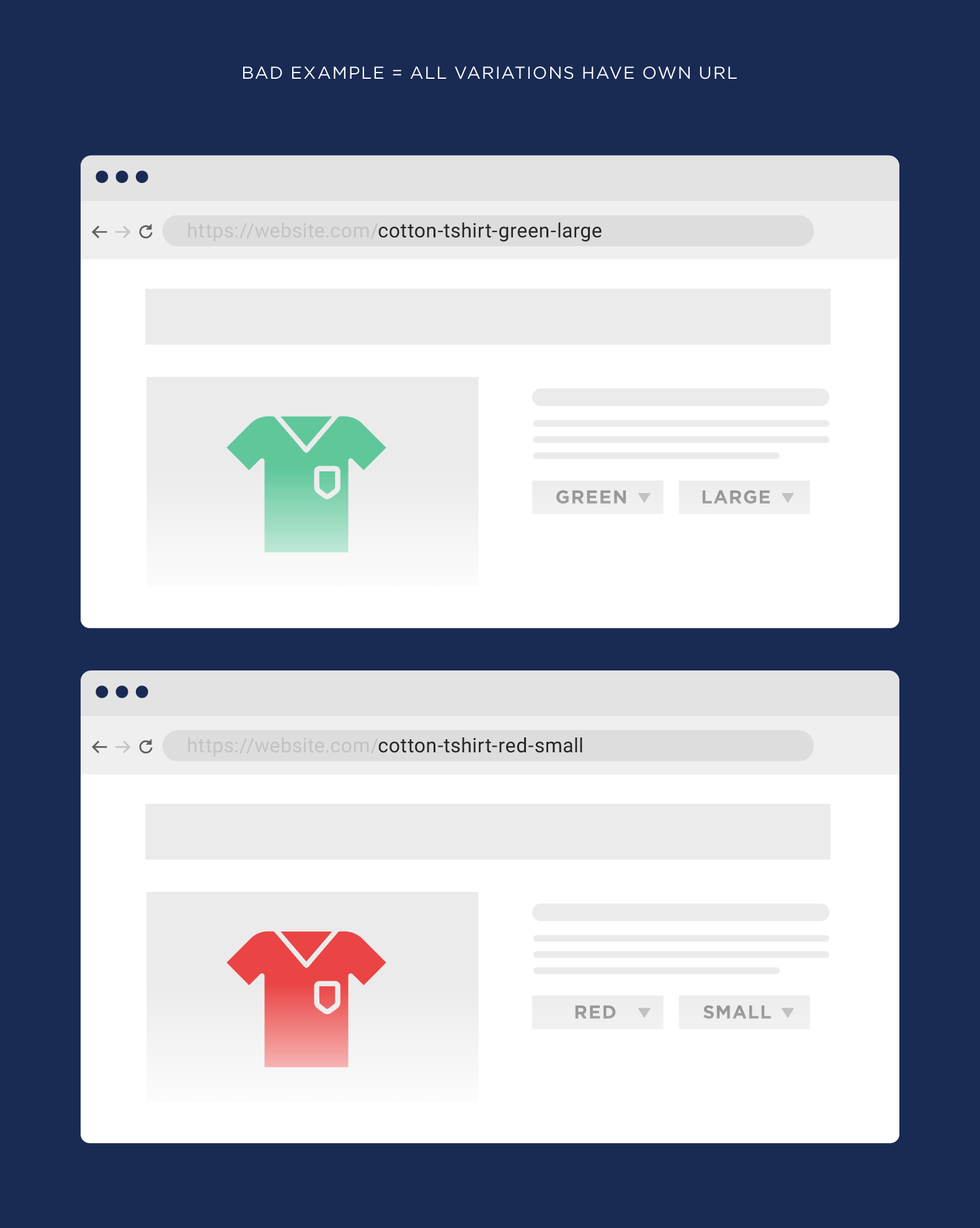

Watch For Same Content on Different URLs

This is the most common reason that duplicate content issues pop up.

For example, let’s say that you run an ecommerce site.

And you have a product page that sells t-shirts.

If everything is set up right, every size and color of that t-shirt will still be on the same URL.

But sometimes you’ll find that your site creates a new URL for every different version of your product… which results in THOUSANDS of duplicate content pages.

Another example:

If your site has a search function, those search result pages can get indexed too. Again, this can easily add 1,000+ pages to your site. All of which contain duplicate content.
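Here's a minimal sketch of how you might spot this in a list of crawled URLs. It assumes the variant URLs differ only by query parameters named `size` and `color`. Those names, the `example.com` URLs and both function names are made up for illustration, so swap in whatever your own site uses.

```python
from collections import defaultdict
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Hypothetical parameter names that only change which product variant is shown.
VARIANT_PARAMS = {"size", "color"}


def strip_variant_params(url: str) -> str:
    """Return the URL without variant-only query parameters or fragment."""
    parts = urlsplit(url)
    kept = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key.lower() not in VARIANT_PARAMS
    ]
    return urlunsplit((parts.scheme, parts.netloc, parts.path, urlencode(kept), ""))


def group_duplicates(urls):
    """Group URLs that collapse to the same base URL; keep groups of 2 or more."""
    groups = defaultdict(list)
    for url in urls:
        groups[strip_variant_params(url)].append(url)
    return {base: members for base, members in groups.items() if len(members) > 1}


if __name__ == "__main__":
    crawled = [
        "https://example.com/t-shirt?size=m&color=red",
        "https://example.com/t-shirt?size=l&color=blue",
        "https://example.com/t-shirt",
        "https://example.com/hoodie",
    ]
    for base, members in group_duplicates(crawled).items():
        print(base, "has", len(members), "URL versions")
```

Any group with more than one member is a set of URLs that probably serve the same content.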

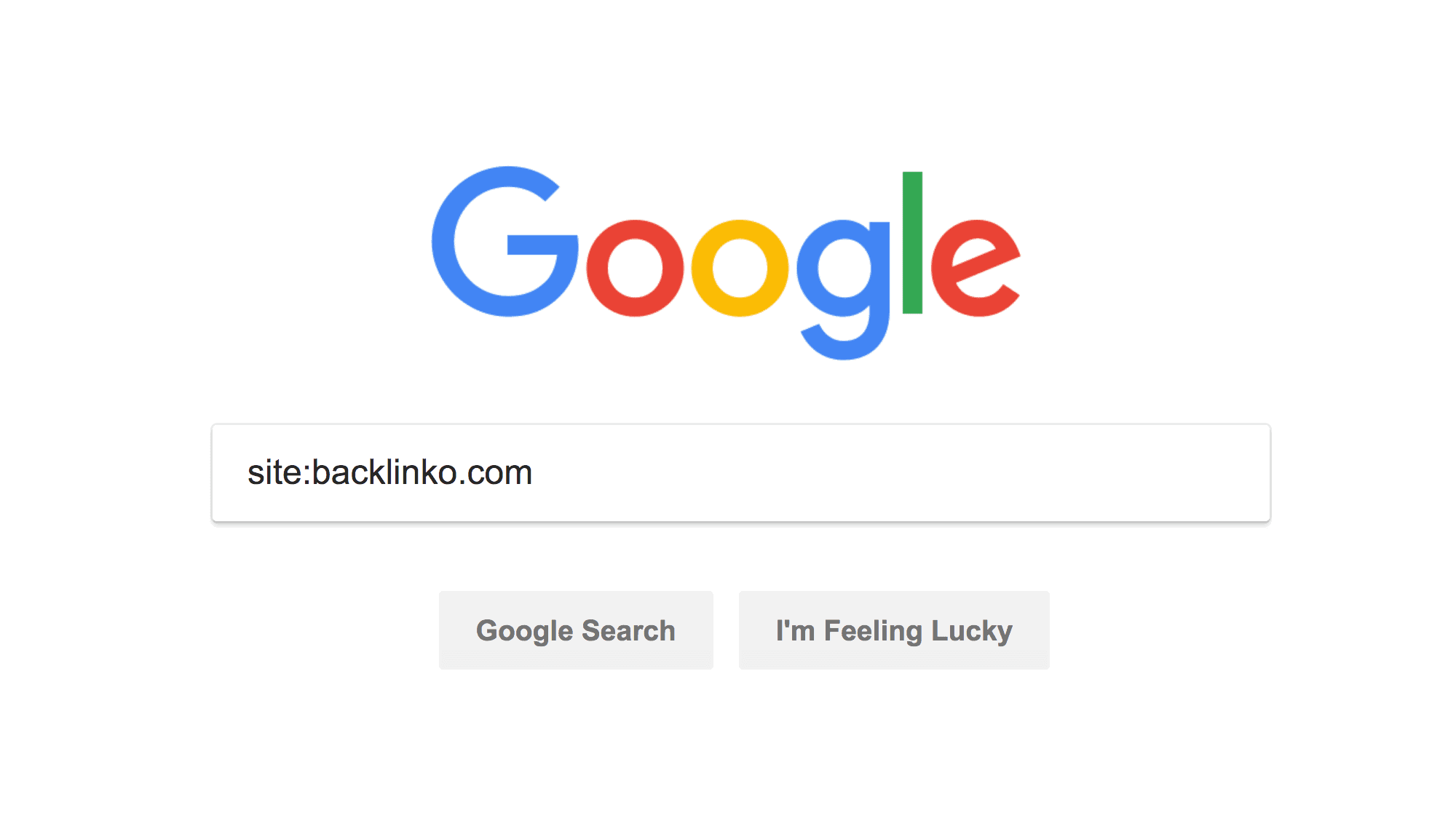

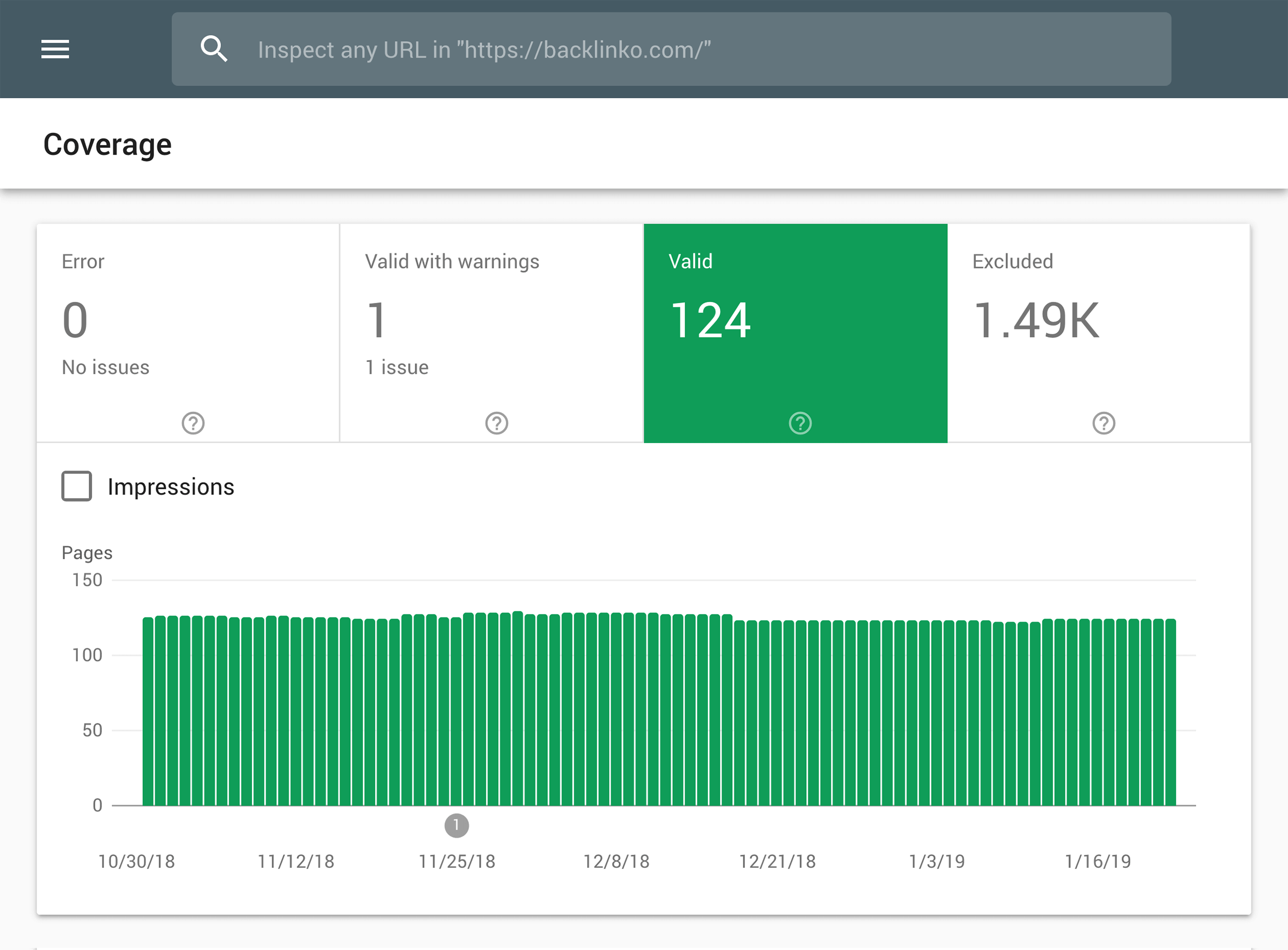

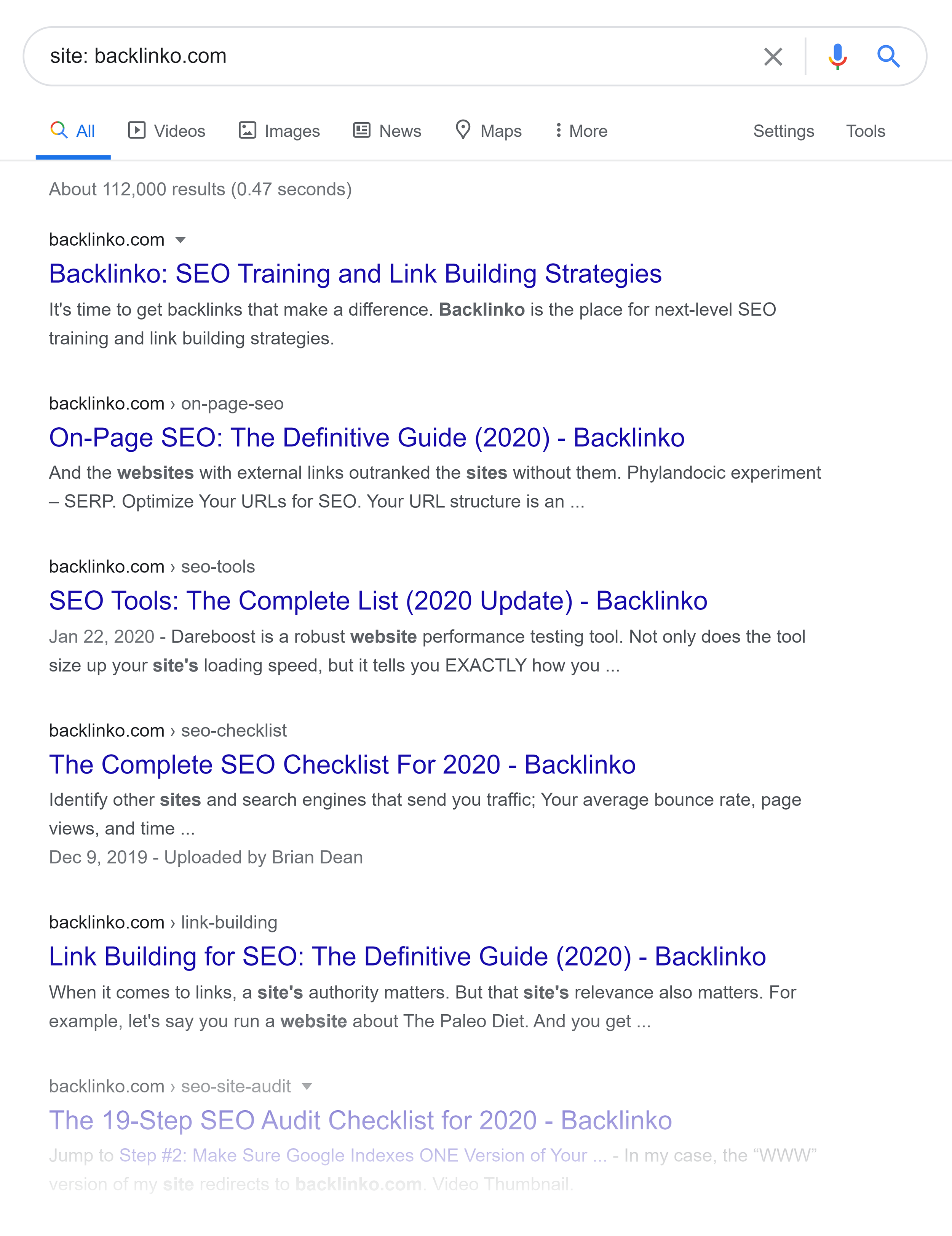

Check Indexed Pages

One of the easiest ways to find duplicate content is to take a look at the number of pages from your site that are indexed in Google.

You can do this by searching for site:example.com in Google.

Or check out your indexed pages in the Google Search Console.

Either way, this number should line up with the number of pages that you manually created.

For example, Backlinko has 112 pages indexed, which is the number of pages that we made.

If that number was 16,000 or 160,000 we’d know that lots of pages were getting added automatically. And those pages would likely contain significant amounts of duplicate content.
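If you keep an XML sitemap of the pages you created, you can make this comparison a bit more systematic. The sketch below is only an illustration: it assumes your sitemap lists every page you made on purpose, and that you type in the indexed count yourself from Search Console or a site: search. The function names and sample data are hypothetical.

```python
import xml.etree.ElementTree as ET

# Namespace used by standard XML sitemaps.
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def count_sitemap_urls(sitemap_xml: str) -> int:
    """Count the <loc> entries in a sitemap document."""
    root = ET.fromstring(sitemap_xml)
    return len(root.findall("sm:url/sm:loc", SITEMAP_NS))


def index_bloat_ratio(indexed_pages: int, created_pages: int) -> float:
    """How many indexed pages exist per page you intentionally created."""
    if created_pages <= 0:
        raise ValueError("created_pages must be positive")
    return indexed_pages / created_pages


# Tiny made-up sitemap with two pages.
SAMPLE_SITEMAP = (
    '<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">'
    "<url><loc>https://example.com/</loc></url>"
    "<url><loc>https://example.com/blog</loc></url>"
    "</urlset>"
)

if __name__ == "__main__":
    created = count_sitemap_urls(SAMPLE_SITEMAP)
    indexed = 2  # entered by hand from Search Console or a site: search
    print("indexed pages per created page:", index_bloat_ratio(indexed, created))
```

A ratio close to 1 means the two numbers line up. A ratio in the tens or hundreds suggests pages are being generated automatically.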

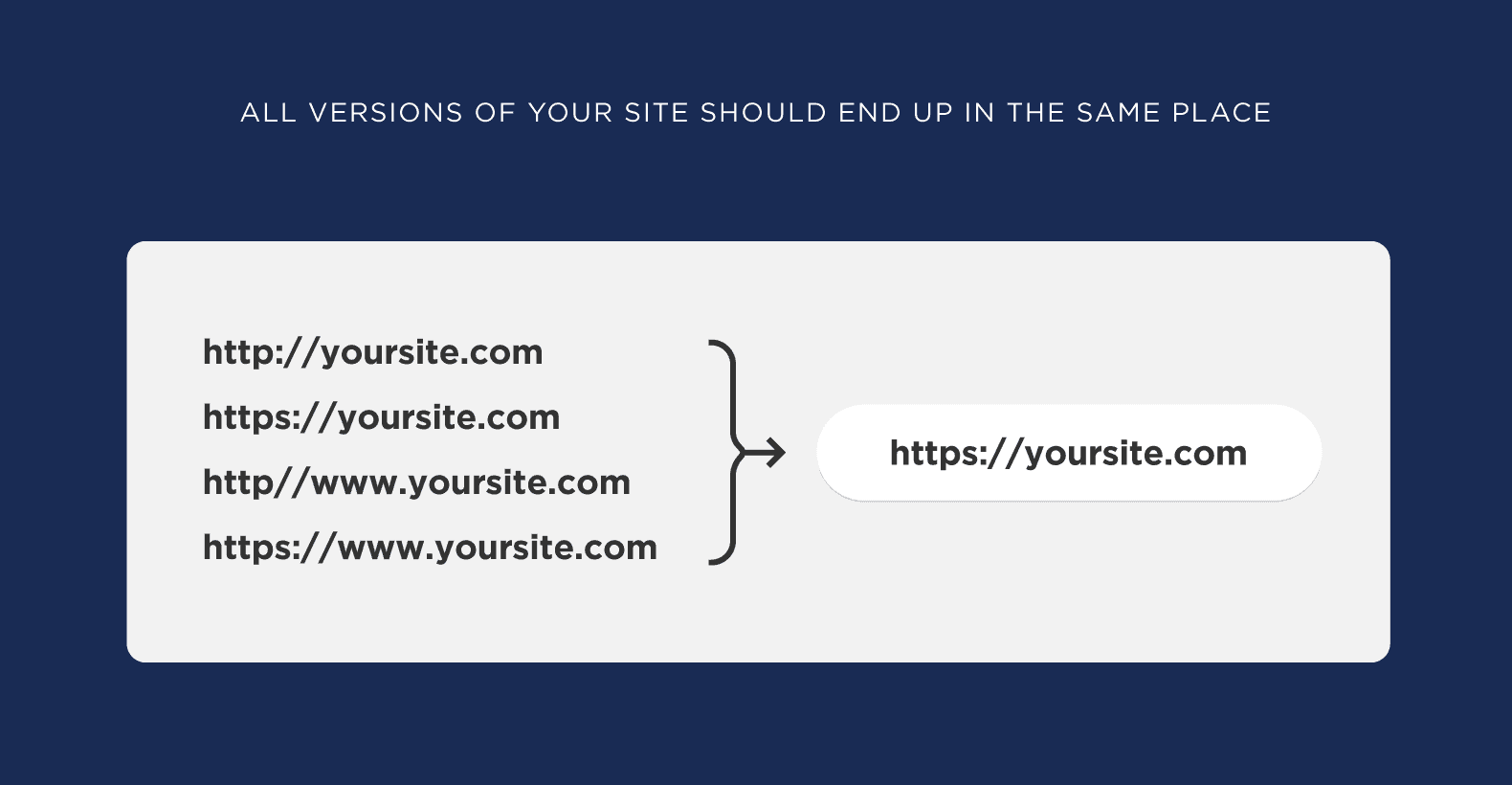

Make Sure Your Site Redirects Correctly

Sometimes you don’t just have multiple versions of the same page… but of the same SITE.

Although this is rare, I’ve seen it happen in the wild many times.

This issue crops up when the “WWW” version of your website doesn’t redirect to the “non-WWW” version.

(Or vice versa)

This can also happen if you switched your site over to HTTPS… and didn’t redirect the HTTP site.

In short: all the different versions of your site should end up in the same place.
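To make that concrete, here's a small sketch that lists the four usual entry points for a domain and maps each one to a single preferred version. It assumes the preferred version is HTTPS without “www” on `example.com`. That choice is just for illustration, and the opposite choice works equally well as long as you stick with it.

```python
from urllib.parse import urlsplit, urlunsplit

# Assumption for this sketch: the preferred version is HTTPS without "www".
PREFERRED_SCHEME = "https"
PREFERRED_HOST = "example.com"


def site_variants(host: str):
    """List the four common entry points for a bare domain like 'example.com'."""
    return [
        f"{scheme}://{prefix}{host}/"
        for scheme in ("http", "https")
        for prefix in ("", "www.")
    ]


def preferred_url(url: str) -> str:
    """Map any version of a URL on our site to the single preferred version."""
    parts = urlsplit(url)
    host = parts.netloc.lower()
    if host.startswith("www."):
        host = host[len("www."):]
    if host != PREFERRED_HOST:
        return url  # not our site, leave it untouched
    return urlunsplit(
        (PREFERRED_SCHEME, host, parts.path or "/", parts.query, parts.fragment)
    )


if __name__ == "__main__":
    for variant in site_variants("example.com"):
        print(variant, "->", preferred_url(variant))
```

If your server is set up correctly, requesting each variant should land you on the one URL that `preferred_url` returns.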

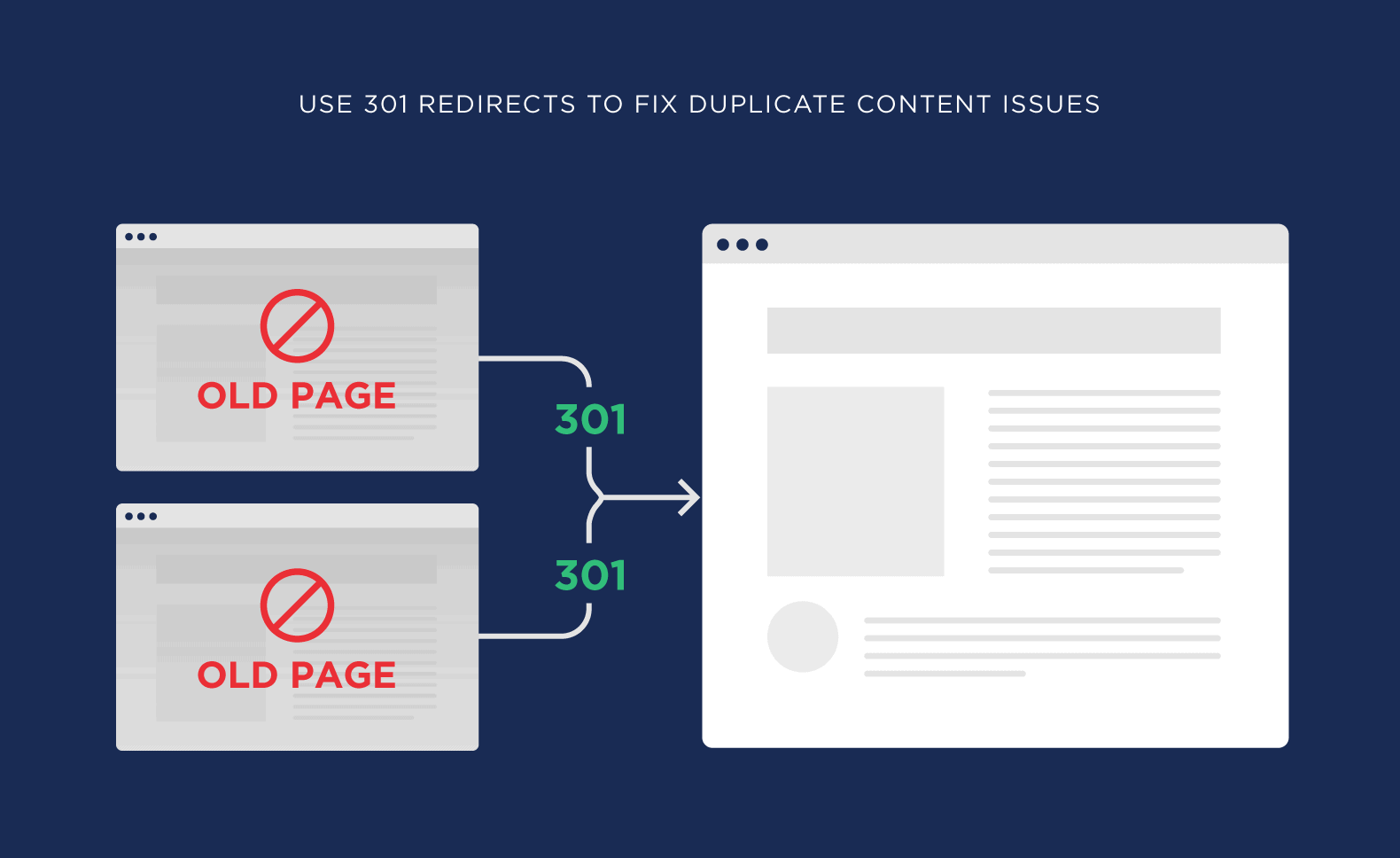

Use 301 Redirects

301 redirects are the easiest way to fix duplicate content issues on your site.

(Besides deleting pages altogether)

So if you found a bunch of duplicate content pages on your site, redirect them back to the original.

Once Googlebot stops by, it will process the redirect and ONLY index the original content.

(Which can help that original page start to rank)
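Most sites set up 301s in their server config or CMS, but the underlying idea is simple. Here's a minimal sketch written as a tiny Python WSGI app. The paths in `REDIRECTS` are hypothetical, and this is an illustration of the mechanism rather than a recommended production setup.

```python
# Hypothetical mapping from duplicate paths to the original page.
REDIRECTS = {
    "/t-shirt-red": "/t-shirt",
    "/t-shirt-blue": "/t-shirt",
}


def app(environ, start_response):
    """Minimal WSGI app: answer known duplicate paths with a 301 redirect."""
    path = environ.get("PATH_INFO", "/")
    target = REDIRECTS.get(path)
    if target is not None:
        # 301 tells browsers and crawlers the move is permanent.
        start_response("301 Moved Permanently", [("Location", target)])
        return [b""]
    start_response("200 OK", [("Content-Type", "text/plain; charset=utf-8")])
    return [f"Original content for {path}".encode("utf-8")]
```

To try it locally, you could serve `app` with `wsgiref.simple_server.make_server` from the standard library and request one of the duplicate paths.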

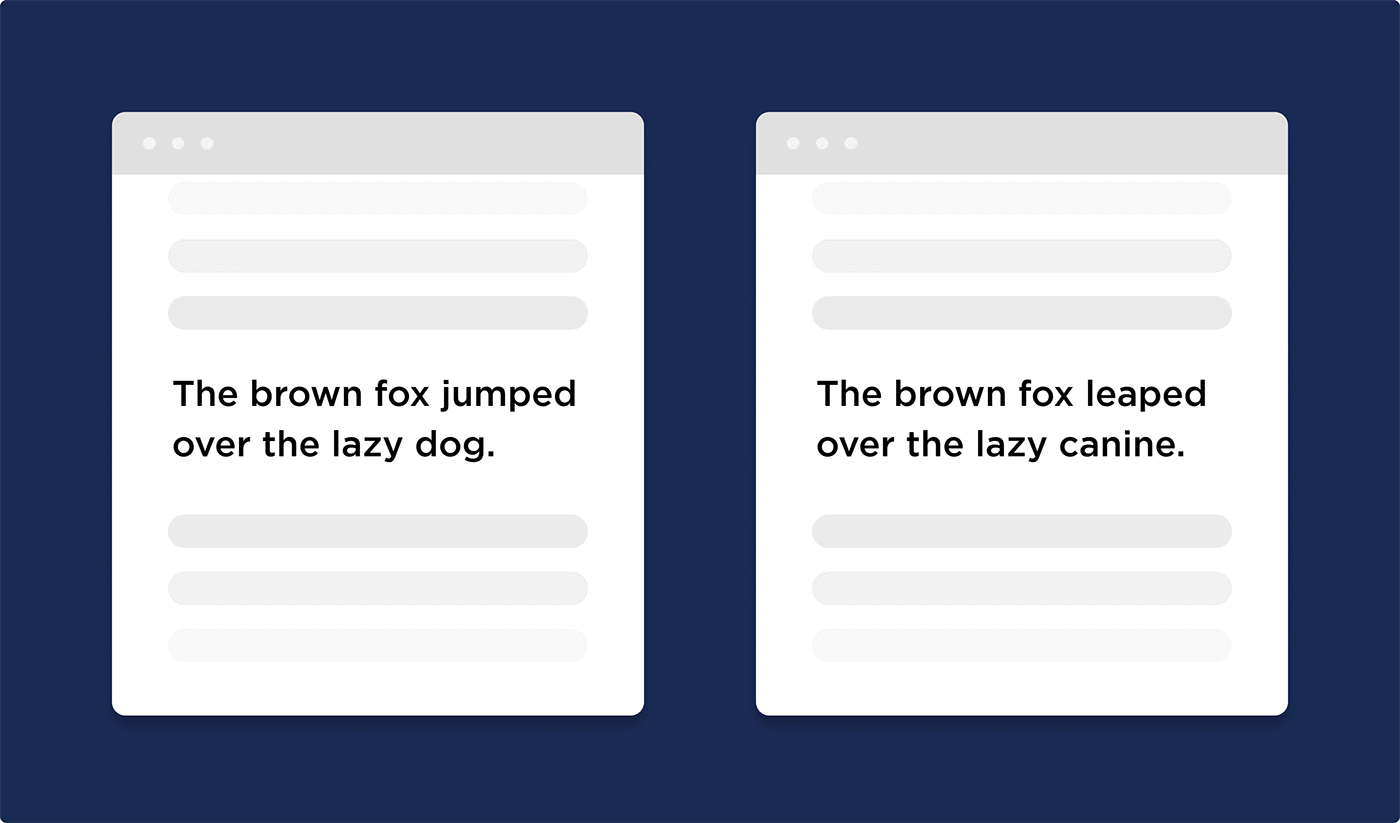

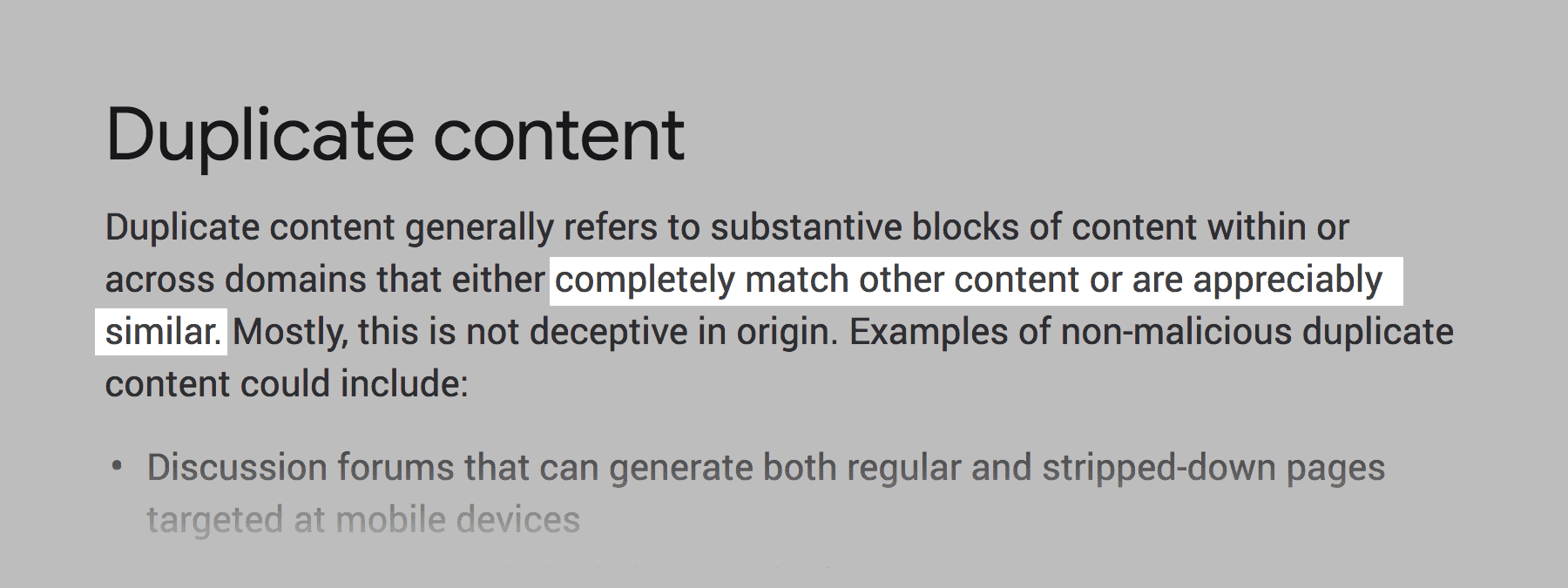

Keep An Eye Out For Similar Content

Duplicate content doesn’t ONLY mean content that’s copied word-for-word from somewhere else.

In fact, Google’s definition of duplicate content covers content that is “appreciably similar” to other content, not just exact matches.

So even if your content is technically different than what’s out there, you can still run into duplicate content problems.

This isn’t an issue for most sites. Most sites have a few dozen pages. And they write unique stuff for every page.

But there are cases where “similar” duplicate content can crop up.

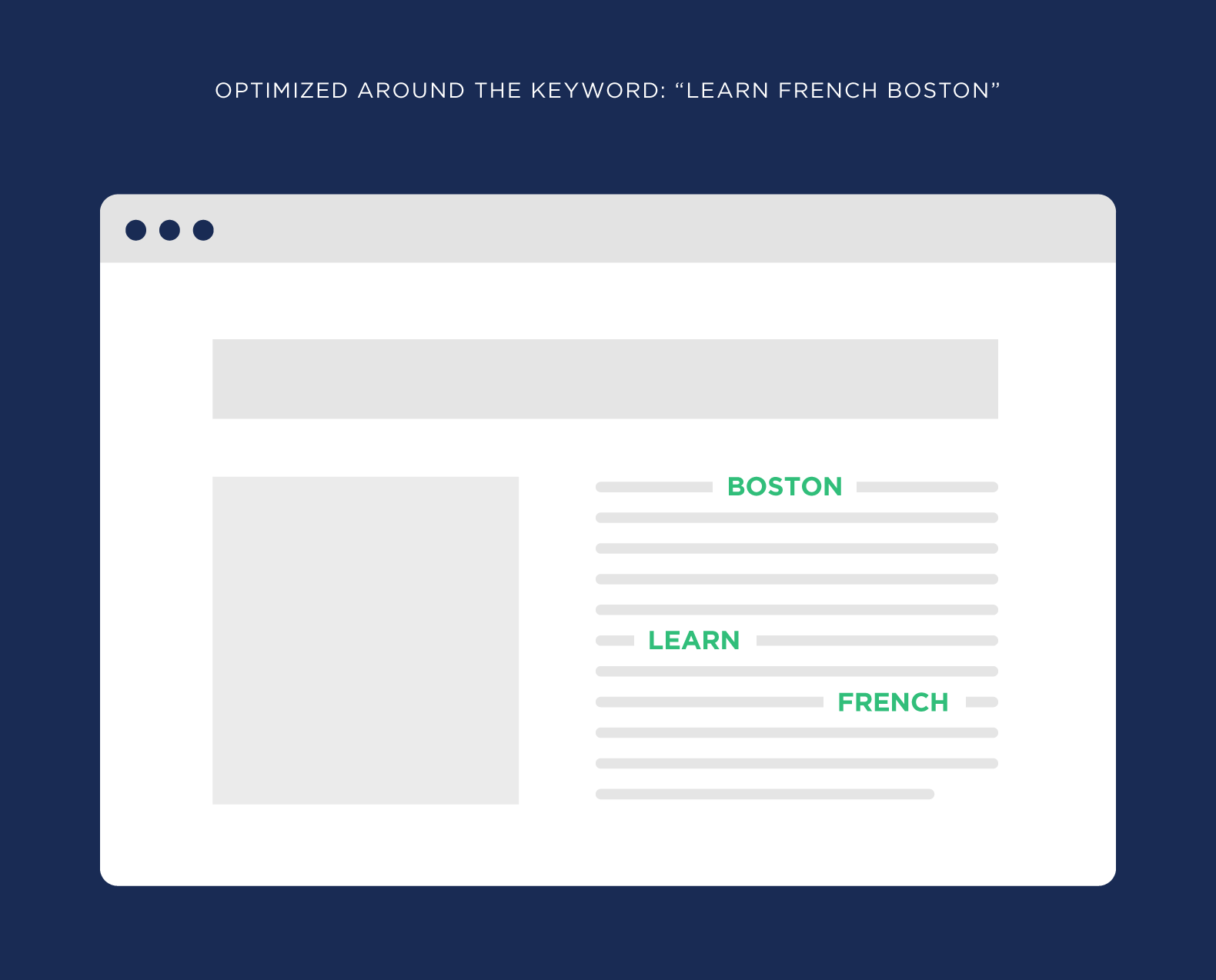

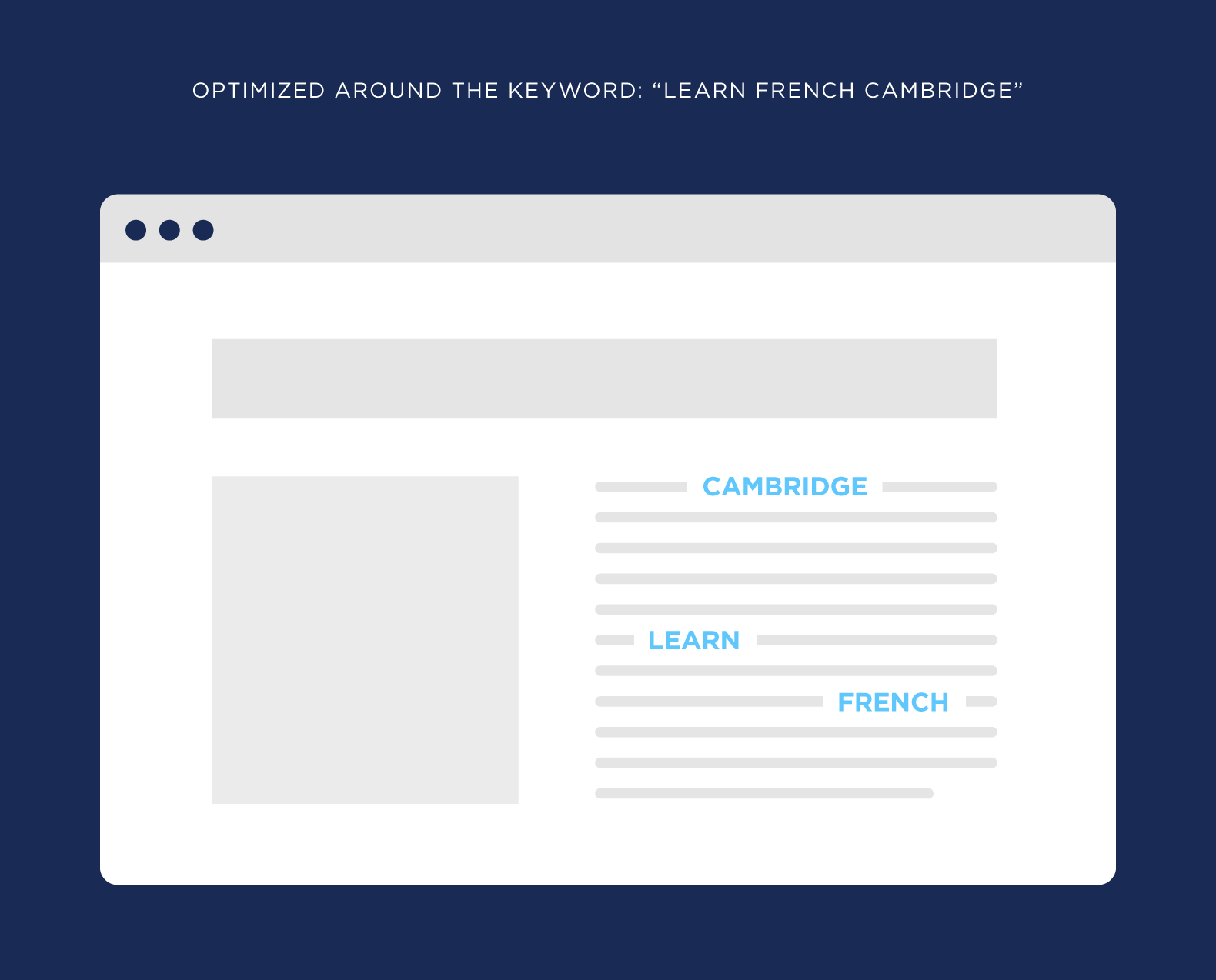

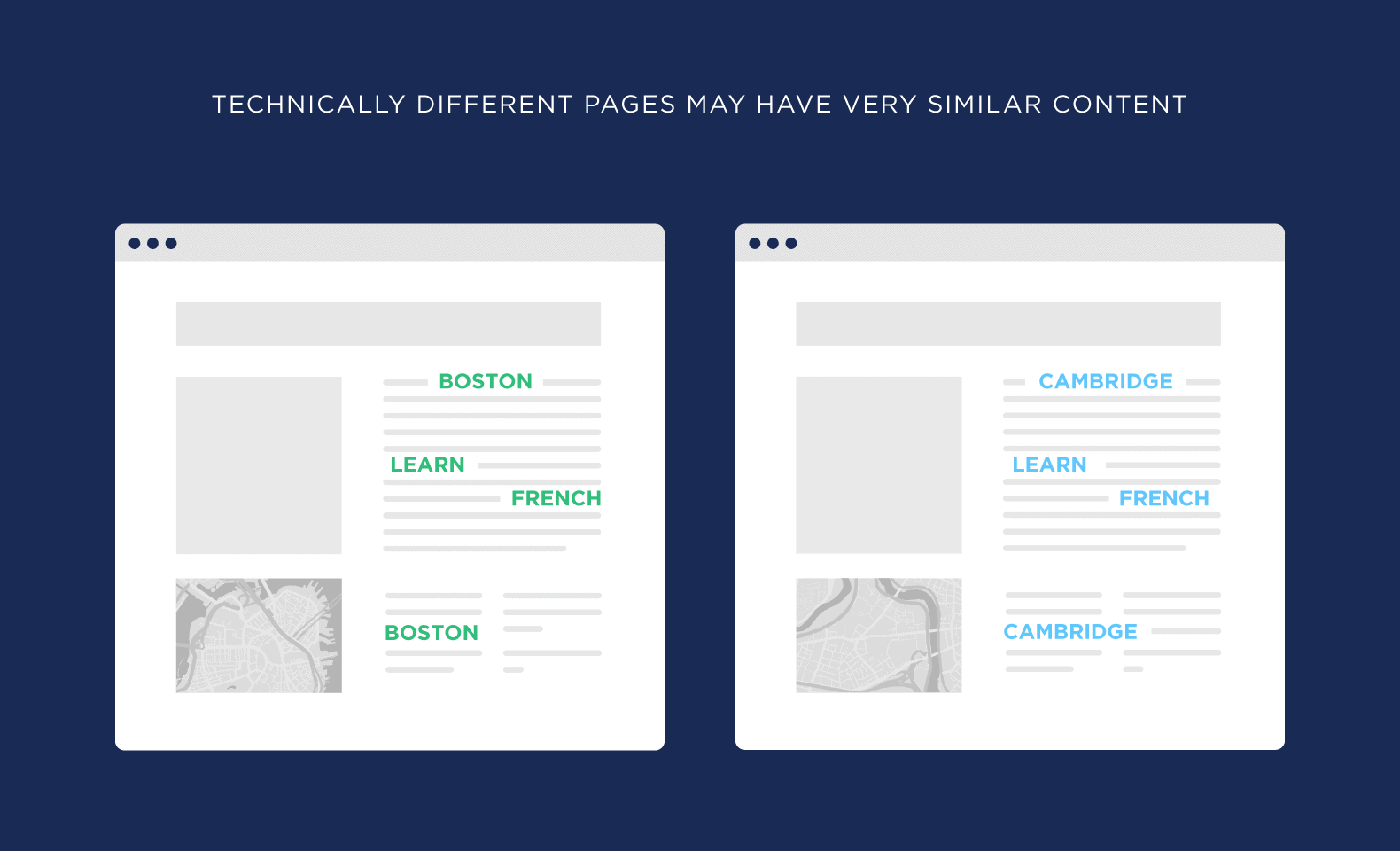

For example, let’s say you run a website that teaches people how to speak French.

And you serve the greater Boston area.

Well, you might have one services page optimized around the keyword: “Learn French Boston”.

And another page that’s trying to rank for “Learn French Cambridge”.

Sometimes the content will technically be different. For example, one page lists the address of the Boston location. And the other page has the Cambridge address.

But for the most part, the content is super similar.

That’s technically duplicate content.

Is it a pain to write 100% unique content for every page on your site? Yup. But if you’re serious about ranking every page on your site, it’s a must.
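If you want a rough feel for how similar two pages are, you can compare overlapping word sequences (“shingles”). The sketch below uses Jaccard similarity on three-word shingles. To be clear, this is a common text-comparison technique and not how Google measures similarity. The sample copy and function names are made up for illustration.

```python
import re


def shingles(text: str, size: int = 3):
    """Return the set of overlapping word sequences of the given size."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    if len(words) < size:
        return {tuple(words)} if words else set()
    return {tuple(words[i:i + size]) for i in range(len(words) - size + 1)}


def similarity(text_a: str, text_b: str, size: int = 3) -> float:
    """Jaccard similarity of the two shingle sets (0.0 = no overlap, 1.0 = identical)."""
    set_a, set_b = shingles(text_a, size), shingles(text_b, size)
    if not set_a and not set_b:
        return 1.0
    return len(set_a & set_b) / len(set_a | set_b)


if __name__ == "__main__":
    boston = ("Learn French in Boston with small group classes taught by "
              "native speakers. Visit our Boston location today.")
    cambridge = ("Learn French in Cambridge with small group classes taught by "
                 "native speakers. Visit our Cambridge location today.")
    print(f"similarity: {similarity(boston, cambridge):.2f}")
```

Scores near 1.0 mean the pages are close to identical. Where you draw the line is a judgment call, so treat any threshold as a starting point.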

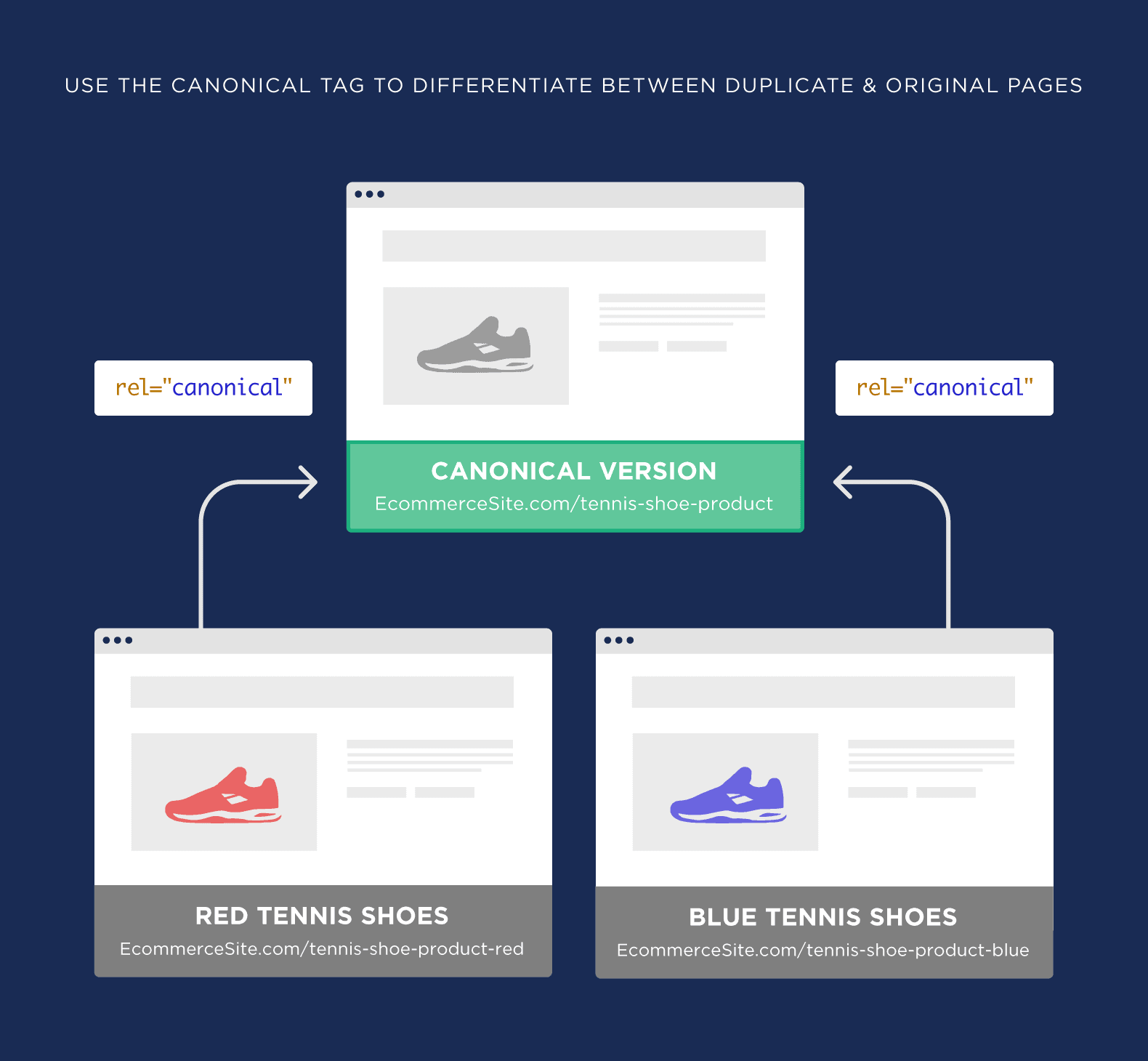

Use the Canonical Tag

The rel=canonical tag tells search engines:

“Yes, we have a bunch of pages with duplicate content. But THIS page is the original. You can ignore the rest”.

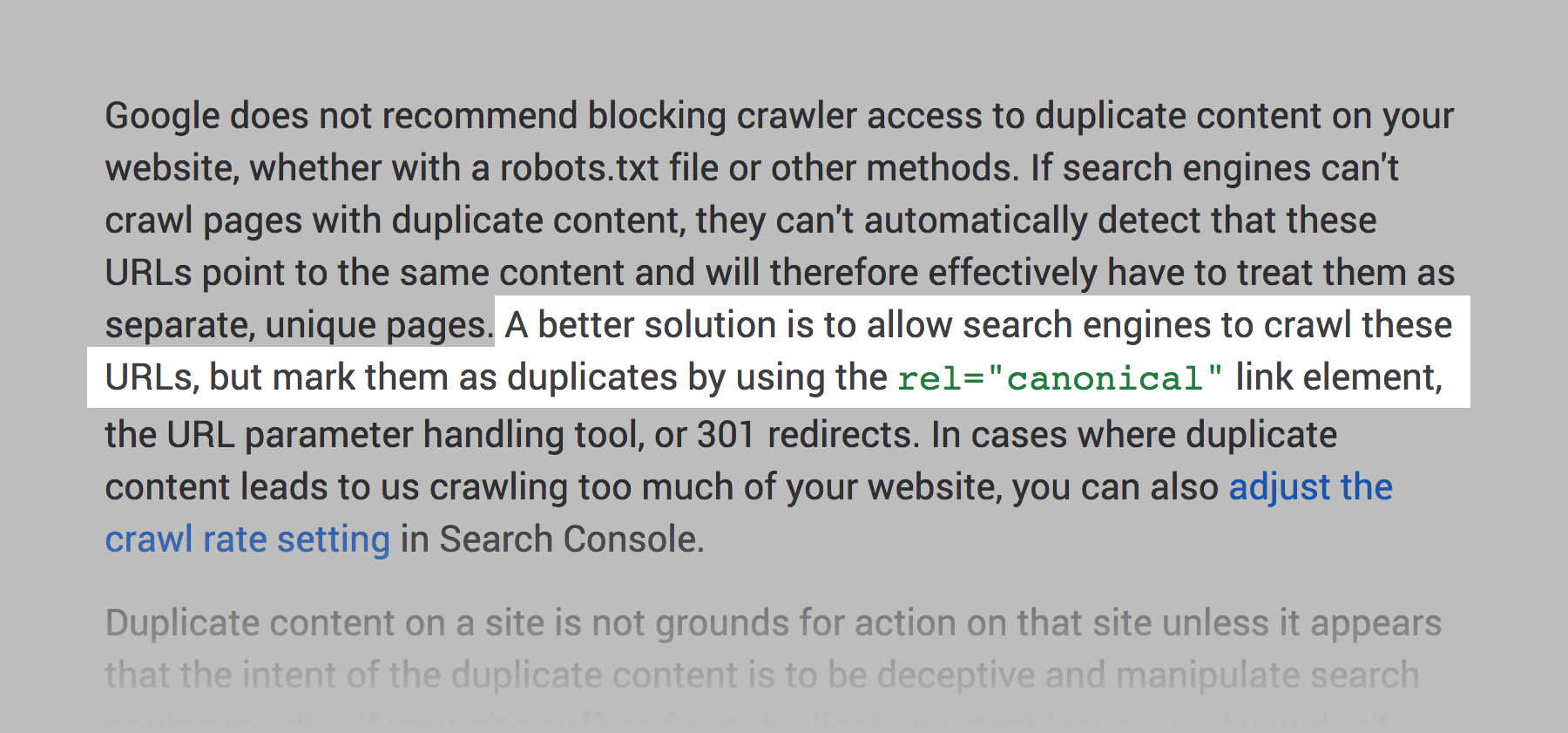

Google has said that a canonical tag is better than blocking pages with duplicate content.

(For example, blocking Googlebot using robots.txt or with a noindex tag in your web page HTML)

So if you find a bunch of pages on your site with duplicate content, you want to either:

- Delete them

- Redirect them

- Use the canonical tag
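For reference, the canonical tag is a `<link rel="canonical">` element that goes in the `<head>` of each duplicate page and points at the original. Here's a minimal sketch that builds the tag and checks which URL a page declares as canonical. The helper names and the `example.com` URLs are hypothetical.

```python
from html import escape
from html.parser import HTMLParser


def canonical_link_tag(original_url: str) -> str:
    """Build the tag that goes in the <head> of each duplicate page."""
    return f'<link rel="canonical" href="{escape(original_url, quote=True)}">'


class CanonicalFinder(HTMLParser):
    """Record the href of the last rel=canonical link found in a page."""

    def __init__(self):
        super().__init__()
        self.canonical = None

    def handle_starttag(self, tag, attrs):
        attr = dict(attrs)
        if tag == "link" and (attr.get("rel") or "").lower() == "canonical":
            self.canonical = attr.get("href")


def find_canonical(page_html: str):
    """Return the canonical URL a page declares, or None if it has none."""
    finder = CanonicalFinder()
    finder.feed(page_html)
    return finder.canonical


if __name__ == "__main__":
    tag = canonical_link_tag("https://example.com/t-shirt")
    page = f"<html><head>{tag}</head><body>Red t-shirt</body></html>"
    print(tag)
    print(find_canonical(page))
```

Running `find_canonical` over your duplicate pages is a quick way to confirm they all point at the same original.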

Use a Tool

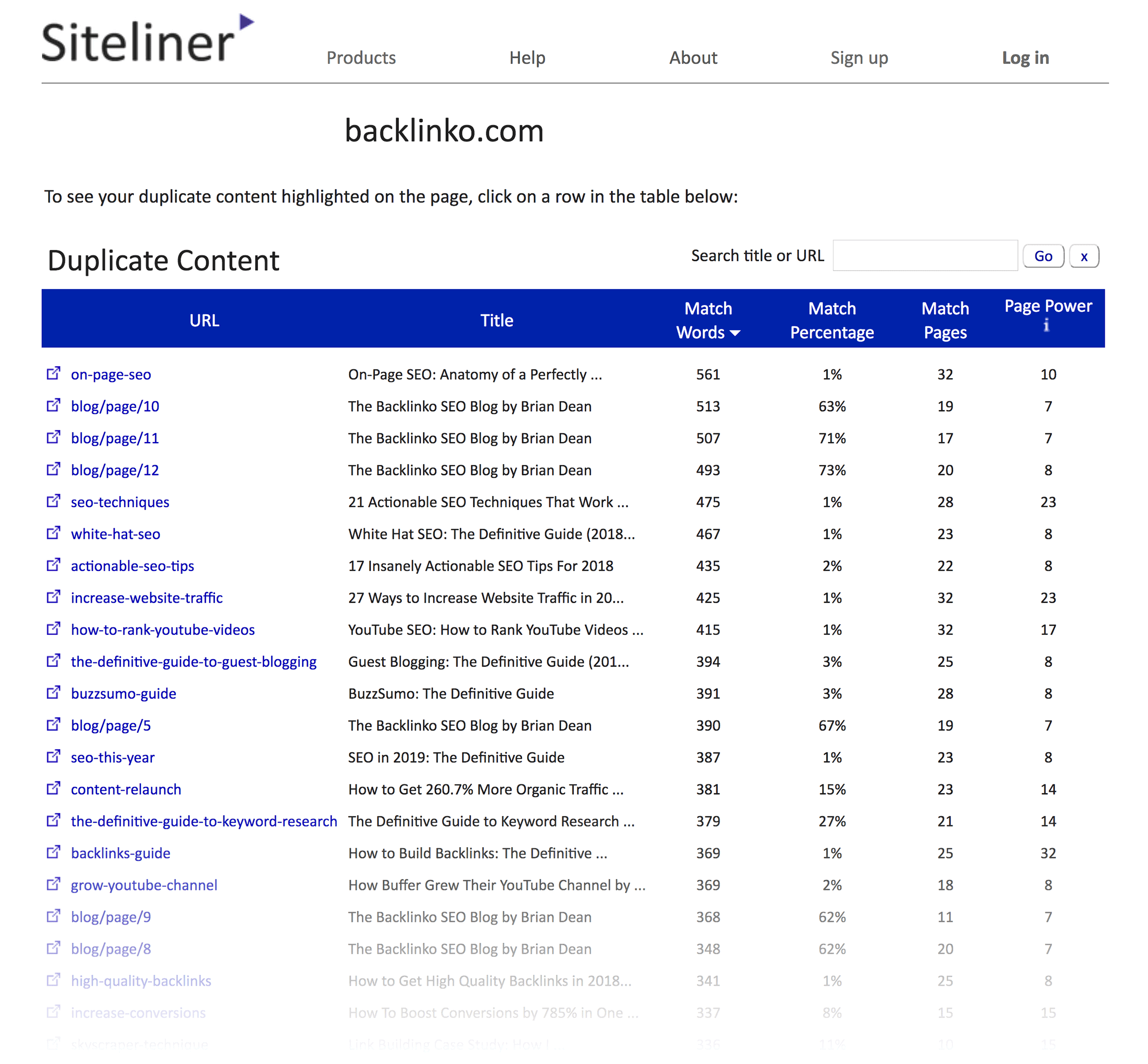

There are a handful of SEO tools that have features designed to spot duplicate content.

For example, Siteliner scans your website for pages that contain lots of duplicate content.

Consolidate Pages

Like I mentioned, if you have lots of pages with straight up duplicate content, you probably want to redirect them to one page.

(Or use the canonical tag)

But what if you have pages with similar content?

Well, you can grind out unique content for every page… OR consolidate them into one mega page.

For example, let’s say that you have 3 blog posts on your site that are technically different… but the content is pretty much the same.

You can combine those 3 posts into one amazing blog post that’s 100% unique.

Because you removed some duplicate content from your site, that page should rank better than the other 3 pages combined.

Noindex WordPress Tag or Category Pages

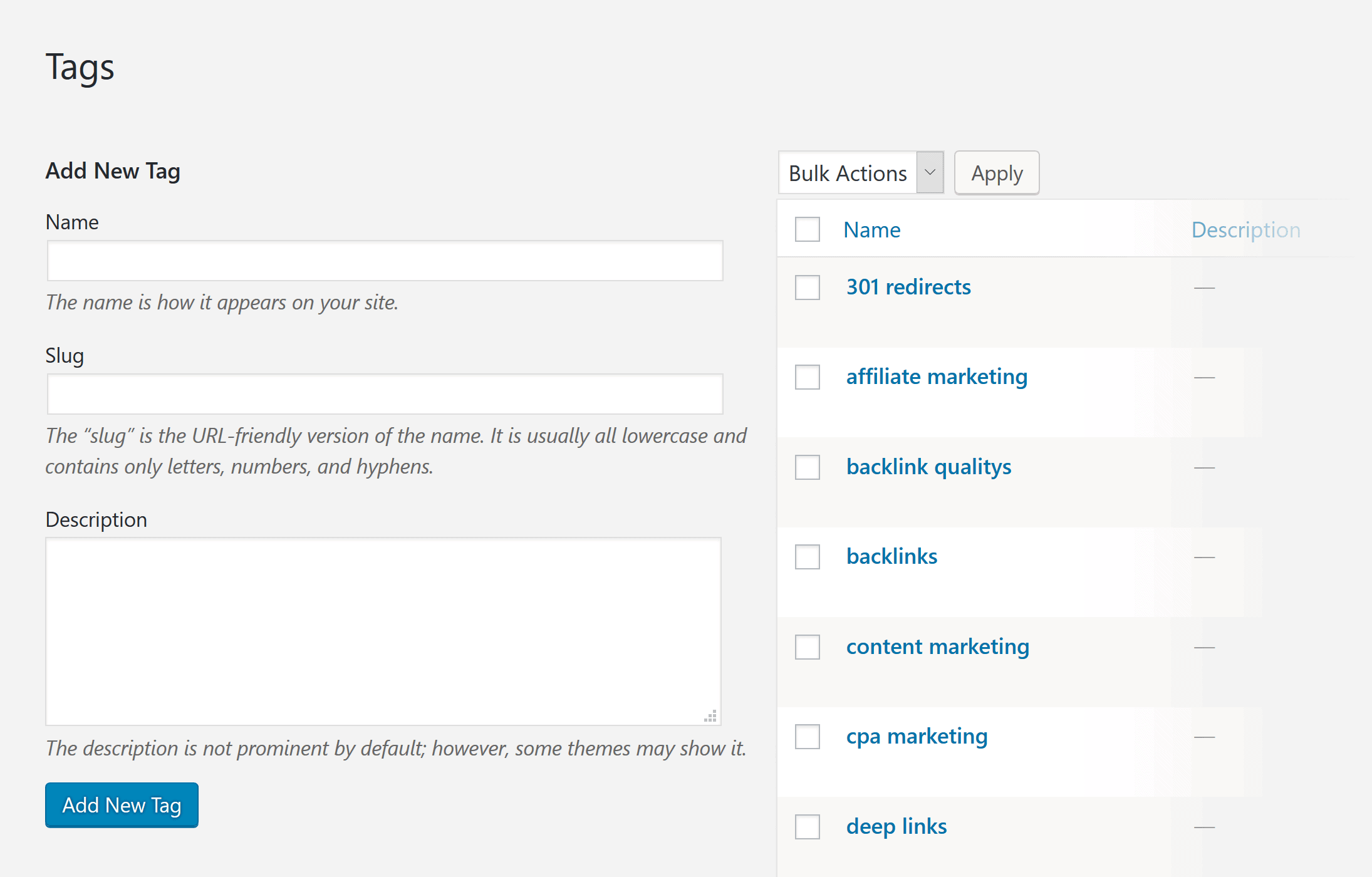

If you use WordPress you might have noticed that it automatically generates tag and category pages.

These pages are HUGE sources of duplicate content.

If they’re useful to users, I recommend adding the “noindex” tag to these pages. That way, they can exist without search engines indexing them.

You can also set WordPress up so these pages don’t get generated at all.
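In practice, “noindex” is a robots meta tag in the page’s HTML, usually added by an SEO plugin. Here's a minimal sketch for checking whether a page actually carries it. The parser class, the function name and the sample pages are made up for illustration, and the check only looks at the meta tag (not HTTP headers).

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collect directives from <meta name="robots" content="..."> tags."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        attr = dict(attrs)
        if tag == "meta" and (attr.get("name") or "").lower() == "robots":
            content = attr.get("content") or ""
            self.directives.update(
                part.strip().lower() for part in content.split(",") if part.strip()
            )


def has_noindex(page_html: str) -> bool:
    """True if the page's robots meta tag includes the noindex directive."""
    parser = RobotsMetaParser()
    parser.feed(page_html)
    return "noindex" in parser.directives


if __name__ == "__main__":
    tag_page = '<html><head><meta name="robots" content="noindex, follow"></head></html>'
    post_page = "<html><head><title>My post</title></head></html>"
    print("tag page noindexed:", has_noindex(tag_page))
    print("post noindexed:", has_noindex(post_page))
```

You could run this against a handful of tag and category URLs after changing your settings to confirm the tag is really there.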

Learn More

How does Google handle duplicate content?: A video from Google’s Matt Cutts on how Google views duplicate content.

The myth of the duplicate content penalty: This post outlines why most people don’t need to worry about a “duplicate content penalty”.